Formerly known as Global Research & Risk Solutions

The data transformation imperative for banks

FRTB requires banks to streamline market, sensitivity and reference data and to set up stronger audit trails

Overview

The Fundamental Review of the Trading Book (FRTB) represents a pivotal regulatory effort to strengthen market risk management and the capital framework governing the trading activities of banks. While some jurisdictions are already in advanced stages of implementing FRTB, others have deferred or recalibrated their deadlines.

Under FRTB, banks must choose between the standardized approach (SA) and the internal model approach (IMA). The choice of approach, together with variations in local rules, means banks will encounter markedly different capital and operational consequences across regions. This diversity underscores the importance of robust market risk management practices to navigate the evolving regulatory environment.

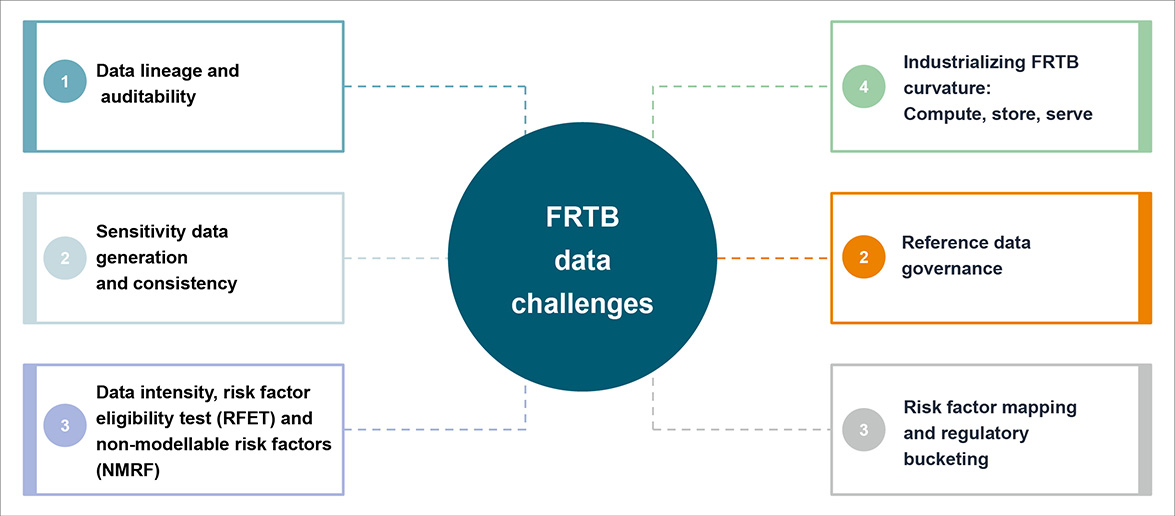

Key data challenges

Data challenges and solutions

Data lineage and auditability

Supervisors are increasingly demanding transparent traceability. Under the SA framework, institutions must be able to trace each capital impact back to the trade that produced it.

Many organizations rely on spreadsheet adjustments and manual mapping. As a result, lineage documentation becomes fragmented. Banks struggle to record evidence related to transformation logic and override approvals.

Solutions

Lineage metadata store: An enterprise lineage repository catalogues every data asset, transformation and downstream consumption with technical and business metadata.

Immutable audit log: All market data overrides, model parameter changes and sensitivity adjustments are written to an append-only audit ledger.
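One common way to make an append-only ledger tamper-evident is to chain entries by hash, so that editing any past record invalidates every later one. The sketch below illustrates that idea only; the class name `AuditLedger`, the event fields and the in-memory list are assumptions made for illustration, not a description of any particular bank's or vendor's implementation.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLedger:
    """Hypothetical in-memory append-only ledger with hash chaining."""

    def __init__(self):
        self._entries = []

    def append(self, event: dict) -> str:
        """Record an event and return the hash that seals it."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        # sort_keys gives a stable serialisation, so the hash is reproducible
        payload = json.dumps(event, sort_keys=True)
        entry_hash = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self._entries.append(
            {"prev": prev_hash, "payload": payload, "hash": entry_hash}
        )
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            expected = hashlib.sha256(
                (prev_hash + entry["payload"]).encode()
            ).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


ledger = AuditLedger()
ledger.append({"type": "market_data_override", "risk_factor": "USD.OIS.5Y",
               "old": 0.0410, "new": 0.0415, "approved_by": "risk_officer_1"})
ledger.append({"type": "model_parameter_change", "parameter": "vol_interp",
               "old": "linear", "new": "cubic", "approved_by": "quant_lead_1"})
chain_is_intact = ledger.verify()
```

In production the entries would sit in write-once storage rather than a Python list; the chaining logic is the part that carries over.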

Point-in-time replay: Historical market data snapshots are retained in immutable storage. Capital figures can be fully reproduced for any business date using the versioned data state and model version in force on that date.
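The replay idea can be sketched as a write-once store keyed by business date that holds both the market data and the model version. Everything here is hypothetical: `SnapshotStore`, `toy_capital` and the risk factor name are illustrative, and the capital function stands in for whatever engine a bank actually runs.

```python
from datetime import date
from types import MappingProxyType


class SnapshotStore:
    """Hypothetical write-once store of (market data, model version) per date."""

    def __init__(self):
        self._snapshots = {}

    def put(self, business_date: date, market_data: dict, model_version: str) -> None:
        if business_date in self._snapshots:
            # Snapshots are never overwritten; corrections need a new record
            raise ValueError(f"snapshot for {business_date} already exists")
        # Copy the data and expose it through a read-only view
        frozen = MappingProxyType(dict(market_data))
        self._snapshots[business_date] = (frozen, model_version)

    def replay(self, business_date: date, capital_fn):
        """Re-run a capital function on the exact state stored for that date."""
        market_data, model_version = self._snapshots[business_date]
        return capital_fn(market_data, model_version)


def toy_capital(market_data, model_version):
    # Stand-in for a real capital calculation (illustrative only)
    return model_version, sum(market_data.values())


store = SnapshotStore()
store.put(date(2024, 3, 28), {"USD.OIS.5Y": 0.25}, "model-v1")
first_run = store.replay(date(2024, 3, 28), toy_capital)
second_run = store.replay(date(2024, 3, 28), toy_capital)
```

Because the stored state cannot change, two replays of the same date return the same figure, which is the property an auditor needs.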

Regulatory report explainer: A self-service tool enabling risk officers and regulators to drill down from a capital line item through to the constituent sensitivities, market data observations and the respective instrument attributes.

Sensitivity data generation and consistency

Institutions must generate delta, vega and curvature sensitivities consistently across asset classes. Large banking organizations typically operate multiple pricing and risk engines that support multiple asset classes and business lines. Systems such as Murex or Calypso Technology often coexist alongside proprietary pricing libraries developed by quantitative teams.

Each platform may apply different yield curve construction logic, use a different volatility surface interpolation method, and implement its own shock sizes or calibration conventions.

Banks also struggle with a lack of standardized shock definitions. Sensitivities must be calculated against prescribed risk factor shocks (e.g., a 1 basis point (bp) or 1% shift in rates, parallel vs bucketed shocks). In practice, engines differ in shock size and shape: one may compute interest-rate deltas using a 1 bp parallel shift per curve while another uses bucketed key rate shifts of 5 bps.
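To see why shock size alone matters, consider a minimal sketch that bumps the rate on a single zero-coupon cash flow by 1 bp and by 5 bps and scales both results to a per-bp delta. The instrument, the 3% rate, the 30-year maturity and the function names are assumptions chosen for illustration; a real engine would bump full curves.

```python
import math


def zero_coupon_pv(rate: float, maturity_years: float,
                   notional: float = 1_000_000.0) -> float:
    """Present value of one cash flow under continuous compounding."""
    return notional * math.exp(-rate * maturity_years)


def delta_per_bp(rate: float, maturity_years: float, shock_bp: float) -> float:
    """Finite-difference delta, expressed as PV change per 1 bp of shock."""
    shock = shock_bp * 1e-4  # convert basis points to a decimal rate
    bumped = zero_coupon_pv(rate + shock, maturity_years)
    base = zero_coupon_pv(rate, maturity_years)
    return (bumped - base) / shock_bp


delta_1bp = delta_per_bp(rate=0.03, maturity_years=30.0, shock_bp=1.0)
delta_5bp = delta_per_bp(rate=0.03, maturity_years=30.0, shock_bp=5.0)

# Relative disagreement between the two conventions on the same trade
relative_gap = abs(delta_1bp - delta_5bp) / abs(delta_1bp)
```

The two figures differ by a fraction of a percent purely because of convexity: the larger bump picks up more of the second-order effect. Nothing is wrong with either engine, yet the outputs will not reconcile unless the shock convention is aligned.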

To avoid timing mismatches, sensitivities must be calculated using a consistent market data snapshot (or at least a tightly controlled window) across all desks and asset classes.

Solutions

Deploy a sensitivity calculation engine (SCE) as a shared source consumed by front-office profit and loss, risk management and regulatory capital pipelines.

Create a golden source of market data from which all departments consume market time-series data.

Implement a sensitivity reconciliation framework with automated daily break detection: Any delta, vega or curvature break of more than 0.5%, whether against the prior run or across systems, should trigger an automated investigation workflow.
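A minimal sketch of such a break check follows. It compares two runs keyed by (asset class, risk factor, measure) and flags relative differences above the 0.5% tolerance mentioned in the text. The key layout, the `SensitivityBreak` record and the treatment of missing or zero values are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional

BREAK_THRESHOLD = 0.005  # 0.5% relative tolerance, as stated in the text


@dataclass(frozen=True)
class SensitivityBreak:
    key: tuple
    reference: Optional[float]
    candidate: Optional[float]
    relative_diff: float


def detect_breaks(reference: dict, candidate: dict,
                  threshold: float = BREAK_THRESHOLD) -> list:
    """Compare two sensitivity runs (prior vs current, or system A vs B)."""
    breaks = []
    for key in sorted(set(reference) | set(candidate)):
        ref = reference.get(key)
        cand = candidate.get(key)
        if ref is None or cand is None:
            # Present on one side only: treat as a completeness break
            breaks.append(SensitivityBreak(key, ref, cand, float("inf")))
            continue
        if ref == 0.0:
            # Assumed convention: any move away from zero counts as a break
            rel = 0.0 if cand == 0.0 else float("inf")
        else:
            rel = abs(cand - ref) / abs(ref)
        if rel > threshold:
            breaks.append(SensitivityBreak(key, ref, cand, rel))
    return breaks


prior_run = {
    ("rates", "USD.OIS.5Y", "delta"): 1000.0,
    ("fx", "EURUSD", "vega"): 250.0,
}
current_run = {
    ("rates", "USD.OIS.5Y", "delta"): 1004.0,  # 0.4% move, within tolerance
    ("fx", "EURUSD", "vega"): 252.0,           # 0.8% move, flagged
    ("equity", "SPX", "curvature"): 12.5,      # new key, flagged as missing
}
breaks = detect_breaks(prior_run, current_run)
```

Each returned record would feed the investigation workflow; in practice the tolerance is likely to vary by asset class and measure rather than being a single constant.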

Establish an intelligent control framework: A composite metric of completeness, accuracy and timeliness with respect to each day’s sensitivity calculation process.

Data intensity, RFET and NMRF

Under the FRTB IMA, the risk factor eligibility test (RFET) requires banks to prove that every risk factor used in capital models is supported by “real price observations” that satisfy one of two criteria, each combining a minimum observation count and a maximum permitted gap, over a 12-month period.

Non-modellable risk factors (NMRFs) attract a separate capital charge computed via stress scenarios on those factors, so poor data coverage directly increases capital and forces governance on when to add proxies, pool data with other banks, and/or rely on vendor prices.

These data quality issues emerge during RFET assessment:

- Fragmented data sourcing: Bloomberg and Refinitiv data feeds are ingested separately, with no deduplication

- No real price observation (RPO) eligibility filter: Indicative broker quotes are mixed with executable prices in the historical database

- 90-day gap breaches for illiquid tenors
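The three issues above map onto three steps: keep only executable prices, collapse duplicate vendor records to one observation per day, then apply the count and gap test. The sketch below assumes the thresholds of the Basel text as commonly summarised (at least 24 observations in 12 months with no 90-day period holding fewer than four, or at least 100 observations in 12 months); these appear as parameters because local rules may differ. The record fields and function names are hypothetical.

```python
from datetime import date, timedelta


def eligible_dates(observations: list) -> set:
    """Drop indicative quotes and deduplicate across vendors by date."""
    return {obs["date"] for obs in observations if obs["executable"]}


def passes_rfet(obs_dates, as_of: date, min_count: int = 24,
                window_days: int = 90, min_in_window: int = 4,
                alt_min_count: int = 100) -> bool:
    """Count-and-gap test over the 12 months ending at as_of (assumed rule)."""
    start = as_of - timedelta(days=365)
    dates = sorted({d for d in obs_dates if start < d <= as_of})
    if len(dates) >= alt_min_count:
        return True   # high-count criterion met, no gap test needed
    if len(dates) < min_count:
        return False
    # Slide a 90-day window one day at a time across the 12-month period
    window_start = start + timedelta(days=1)
    last_start = as_of - timedelta(days=window_days - 1)
    while window_start <= last_start:
        window_end = window_start + timedelta(days=window_days - 1)
        in_window = sum(1 for d in dates if window_start <= d <= window_end)
        if in_window < min_in_window:
            return False  # gap breach, typical of illiquid tenors
        window_start += timedelta(days=1)
    return True


as_of = date(2024, 12, 31)
# 24 observations spread evenly, one every 15 days
evenly_spread = [as_of - timedelta(days=15 * i) for i in range(24)]
# 24 observations bunched into the last 24 days of the year
bunched = [as_of - timedelta(days=i) for i in range(24)]

raw_feed = [
    {"date": as_of, "source": "vendor_a", "executable": True},
    {"date": as_of, "source": "vendor_b", "executable": True},   # duplicate day
    {"date": as_of - timedelta(days=1), "source": "broker",
     "executable": False},                                       # indicative
]
clean_dates = eligible_dates(raw_feed)

even_result = passes_rfet(evenly_spread, as_of)
bunched_result = passes_rfet(bunched, as_of)
```

The evenly spread series passes while the bunched one fails despite having the same count, which shows why gap monitoring matters as much as volume for illiquid tenors.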

Explore Crisil, a company of S&P Global
